How to Be an Advanced Test Analyst in One Day: Peter Deng

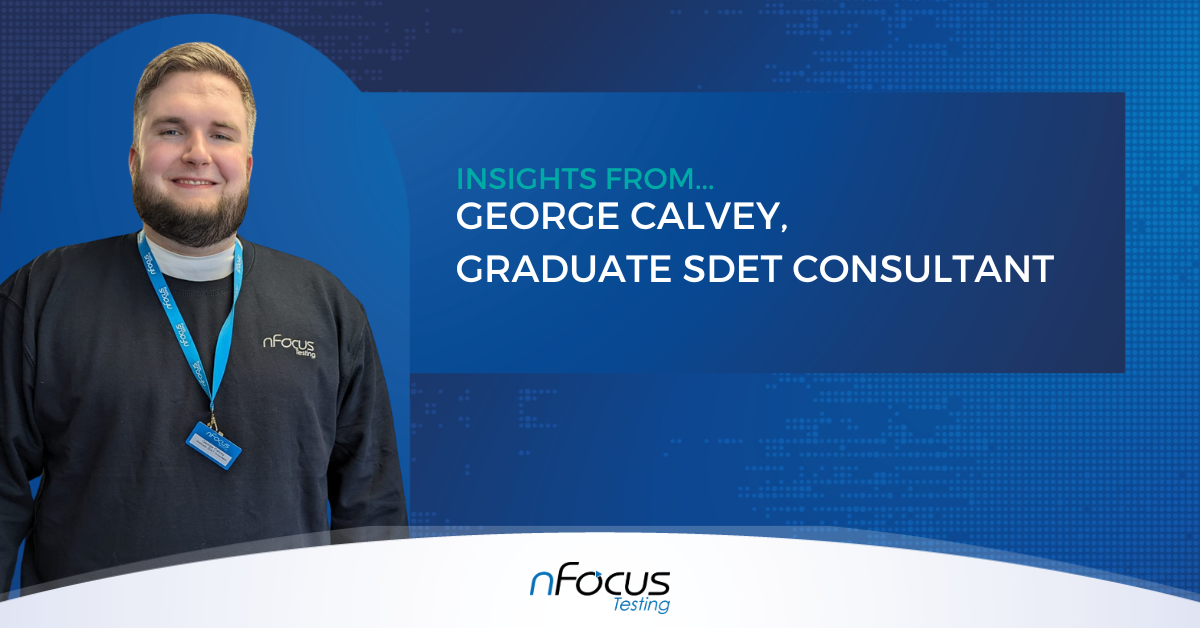

My name is Peter Deng, and I am an SDET consultant at nFocus, where I have been working for just over two and a half years. For the first two years of my work, I was a graduate student going into nFocus’ SDET academy, where I trained under an instructor in software testing, agile, and automation.

In that time, I took two exams with ISTQB, the board for certified testers, and achieved an average of 90% across both, passing with flying colours. After two years of working for a client, it was time for more training, and the natural next step for me was the Advanced Test Analyst (ATA) course. Read on as I share my experiences and tips for future test takers, whether for ATA or for any other ISTQB exam.
